Disinformation, Epistemic Fragmentation, and the Future of Trust in Digital Societies

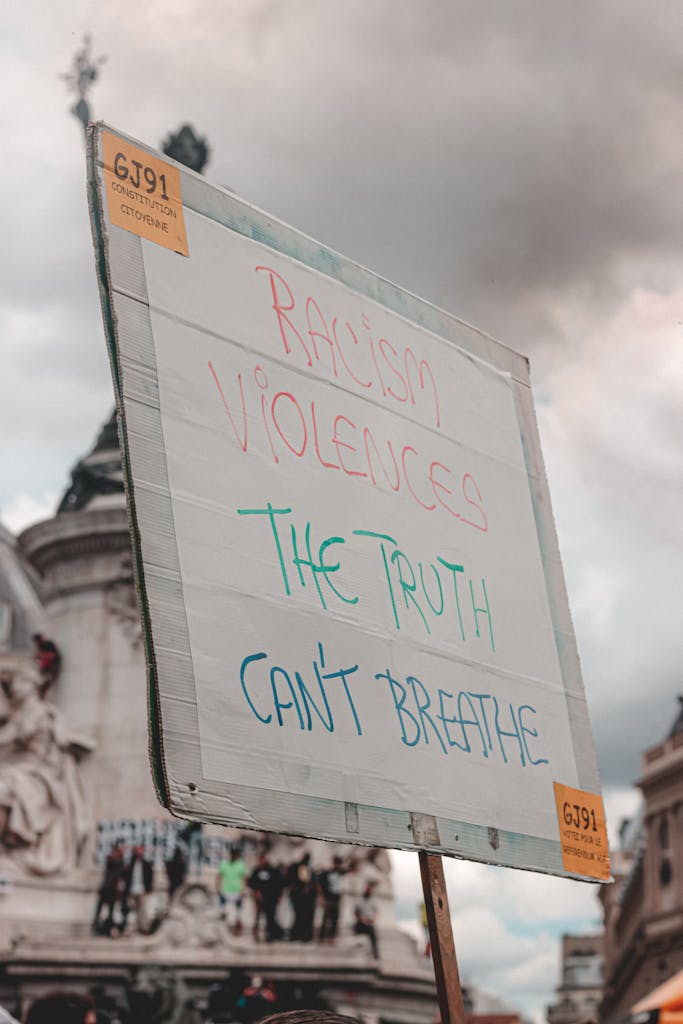

The digital transformation of the information environment in the twenty-first century has reconfigured how knowledge is produced, validated, and contested. Disinformation is no longer confined to discrete falsehoods or orchestrated state propaganda; it now operates within a participatory and highly networked ecosystem in which information is continuously generated, amplified, and recursively reshaped across digital platforms.

In the United States, approximately 55 percent of online users report concern about their ability to distinguish truth from falsehood, while trust in news hovers around 40 percent. These figures indicate a broader erosion of epistemic confidence across advanced information societies. In this context, the accelerated dissemination of misleading or manipulative content constitutes not merely a communicative challenge, but a material risk to democratic governance, public trust, and international security.

Crucially, as Eliot Higgins of Bellingcat states, disinformation should not be misconstrued as the genesis of this crisis. Rather, it is symptomatic of deeper structural shifts in how authority, expertise, and verification are organised. As trust in institutions weakens and decentralised communication structures expand, the ways in which societies arbitrate competing truth claims have become diffuse and contested.

Information Disorder, Not Just Falsehood

Public discourse frequently collapses important conceptual distinctions. Disinformation refers to false information disseminated with deliberate intent to mislead; misinformation denotes the inadvertent spread of inaccuracies. Both operate within the broader paradigm of information disorder, which also encompasses “mal-information”: factually accurate material deployed strategically to distort or inflict harm, often by moving information intended to stay private into the public sphere.

This distinction is not just semantic. The immediate challenge cannot be reduced to fabricated content alone. Selective omission, strategic framing, and affective amplification often have greater influence than the outright falsification of facts. Globally, online influencers and digital personalities are now viewed as significant vectors of misleading content alongside national politicians. These perceptions vary geographically: in countries such as Nigeria and Kenya, 59 percent of respondents identify influencers as primary sources of misleading content, whereas in Western democracies political actors dominate (57 percent in the United States and Spain). These patterns reveal the hybrid nature of contemporary information ecosystems, in which both institutional and non-institutional actors contribute to epistemic instability.

Democratic Integrity at Risk

The implications for democratic systems are increasingly acute. An effective democracy relies heavily on evidence-based discourse and an informed electorate. With roughly four billion people eligible to vote in major elections within a single year, concerns about the proliferation of election-related misinformation are well founded. Evidence demonstrates that exposure to misleading narratives can exacerbate polarisation, erode confidence in electoral processes, and shape political behaviour in durable ways. These effects are visible in both pre- and post-election environments.

In Spain, malicious actors constructed a spoof government website ahead of a regional election, falsely warning of terrorist threats at polling stations. In the United States, persistent allegations of electoral fraud, despite evidentiary refutation, have produced enduring scepticism regarding democratic outcomes, with surveys indicating that nearly 40 percent of the public question the legitimacy of the 2020 election. These beliefs have consequences, including harassment of election officials and destabilised institutional trust.

Developments such as these expose an asymmetry: misleading claims can be produced and disseminated rapidly, at minimal cost and at scale, whereas verification processes remain comparatively slow, resource-intensive, and reactive. False information spreads faster and more widely than accurate reporting, in part due to its novelty and emotional salience, creating structural advantages for actors seeking to manipulate information environments for ideological or financial ends. During election periods, when citizens are actively seeking information, this vulnerability is heightened and audiences become more susceptible to strategic exploitation.

Platforms, Incentives, and Epistemic Fragmentation

Algorithmic recommendation systems, optimised for engagement, systemically privilege content that provokes strong affective responses: outrage, fear, or moral indignation. The result is self-reinforcing. Engagement begets visibility, and, in turn, visibility normalises the narratives being amplified.

Over time, this contributes to the formation of epistemic enclaves in which individuals are disproportionately exposed to information that aligns with preexisting beliefs. Traditional gatekeeping institutions (journalism, academia, and expert communities) are consequently disintermediated, while decentralised actors, often without formal credentials, assume a growing role in shaping public opinion. Authority fragments, verification becomes contested, and trust turns conditional.

This goes beyond informational fragmentation. It erodes the shared standards used to judge what is true. Under such volatile conditions, disagreement extends beyond interpretation to the level of foundational facts themselves, complicating the possibility of collective deliberation.

Can Truth Still Be Defended?

A growing current of scepticism contends that concerns about misinformation are overstated, or that truth claims are indeterminate. Such arguments risk conflating epistemic complexity with epistemic relativism. While some claims resist binary classification, many do not. Numerous historical events, scientific findings, and empirically verifiable phenomena remain open to validation.

The challenge lies not in the absence of truth, but in the conditions, such as wartime, under which it is recognised and accepted. Repetition increases the perceived credibility of false claims, even among individuals without strong prior knowledge commitments. Corrective interventions such as fact-checking and debunking are demonstrably effective, yet only partially so, and they are often temporally disadvantaged, arriving after initial exposure has already shaped beliefs.

This has prompted increasing attention to proactive strategies. These include “prebunking” approaches that anticipate and pre-empt misleading narratives, psychological inoculation techniques that expose audiences to common manipulation tactics, and platform-level interventions such as accuracy prompts designed to disrupt impulsive sharing. Although individually modest in effect, they can collectively enhance resilience in information environments.

Beyond Reactive Responses

Addressing information disorder requires moving beyond reactive interventions; it demands coordinated efforts across technological and institutional levels. Eliot Higgins and Natalie Martin suggest that frameworks such as VDA (Verification, Deliberation, Accountability) can enhance these efforts. Verification concerns how societies establish what is true; deliberation refers to how information is debated and interpreted in public discourse; and accountability addresses how power is scrutinised and held responsible. Strengthening responses to disinformation therefore depends on reinforcing all three functions, rather than relying solely on post hoc correction.

Strengthening verification ecosystems is one critical priority. This entails sustained investment in investigative journalism, the expansion of open-source intelligence capabilities, and closer collaboration across academia, civil society, and the private sector. While open-source investigation has shown considerable promise in enhancing transparency and accountability, questions persist regarding methodological standards and potential misuse.

Equally important is the pedagogical dimension. In an environment where individuals function simultaneously as consumers, producers, and disseminators of information, competencies in media literacy and evidentiary reasoning are indispensable. The aim is not to turn citizens into professional investigators, but to equip them with the capacity to critically interrogate information and recognise manipulation.

Disinformation is best understood not as an aberration to be eliminated, but as an emergent feature of contemporary information systems. The objective is not the eradication of falsehood, a fundamentally unattainable goal, but the mitigation of its most harmful effects within an open and pluralistic communicative environment. How effectively societies meet this challenge will be decisive in shaping the resilience and legitimacy of democratic systems in the digital age.